Visual/Inertial Motion Estimation

Fundamental to the successful operation of UAVs for service and inspection tasks is their ability to estimate their ego-motion and map the environment. Use in industrial inspection scenarios requires a high degree of robustness and accuracy, yet payload, power, and size constraints limit the choice of onboard sensors. We developed a framework that tightly couples visual and inertial cues to achieve the required robustness and accuracy.

This page presents our efforts towards a general-purpose Simultaneous Localization and Mapping (SLAM) device.

Visual/Inertial SLAM Sensor

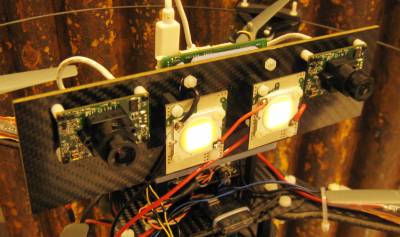

Visual/Inertial Motion Estimation Prototype

First-generation visual/inertial motion estimation system, used in the Narcea field tests in 2012.